Gesture recognition with an intelligent camera

I am passionate about technology and robotics, and on my own blog I am always taking on new tasks. Until now, though, I had hardly ever worked with image processing. A colleague’s LEGO® MINDSTORMS® robot, which uses several different sensors to recognize the rock, paper, or scissors gesture of a hand, gave me an idea: “The robot should be able to ‘see’.” So far, the gesture had to be made at a very specific point in front of the robot to be reliably recognized. Several sensors were needed for this, which made the system inflexible and dampened the joy of playing. Can image processing solve this task more “elegantly”?

From the idea to implementation

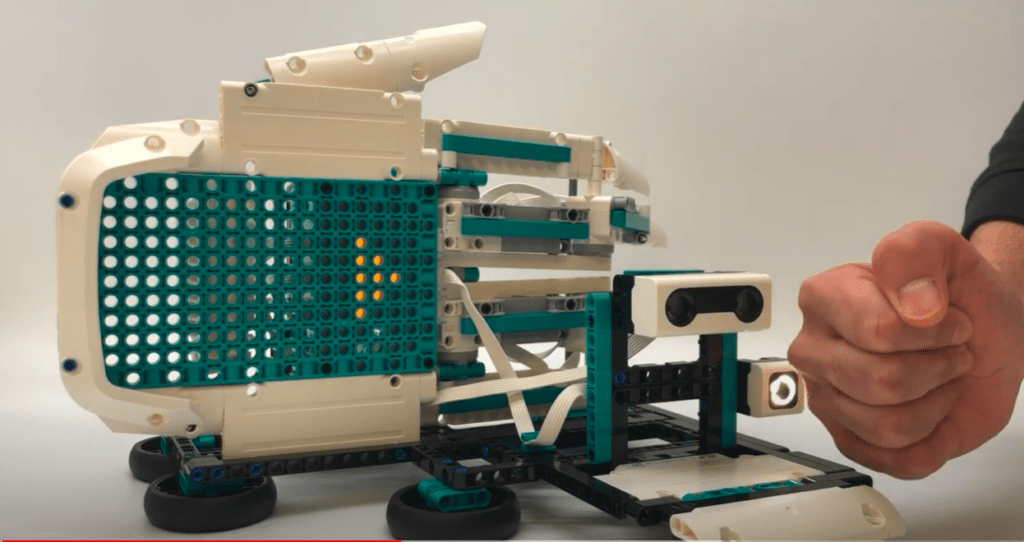

In my search for a suitable camera, I came across IDS NXT, a complete system for intelligent image processing. It fulfilled all my requirements and, thanks to artificial intelligence, can do much more than pure gesture recognition. My interest was piqued, especially because the images are evaluated and the results communicated directly on or through the camera, without an additional PC. In addition, the IDS NXT Experience Kit came with all the components needed to start using the application immediately, without any prior knowledge of AI.

I took the idea further and began to develop a robot that will eventually play “Rock, Paper, Scissors”, following the classic sequence: the (human) player is asked to make one of the familiar gestures (rock, paper, or scissors) in front of the camera. By this point, the virtual opponent has already chosen its gesture at random. The move is evaluated in real time and the winner is displayed.

The first step: Gesture recognition by means of image processing

But some intermediate steps were necessary before that. I began by implementing gesture recognition using image processing, which was new territory for me as a robotics fan. With the help of IDS lighthouse, a cloud-based AI vision studio, this was easier to realize than expected. Here, ideas evolve into complete applications: neural networks are trained on application images that carry the necessary knowledge, in this case the individual gestures from different perspectives, and are then packaged into a suitable application workflow.

The training process was very easy. After taking several hundred pictures of my hands making the rock, scissors, or paper gesture from different angles and against different backgrounds, I simply followed the step-by-step wizard in IDS lighthouse. The very first trained AI was able to recognize the gestures reliably. This works for both left- and right-handers, with a recognition rate of approx. 95%. Probabilities are returned for the labels “Rock”, “Paper”, “Scissor”, and “Nothing”. A satisfactory result. But what happens now with the data obtained?
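One simple way to turn those probabilities into a single decision is to take the most likely label and discard it when it is “Nothing” or too uncertain. The following Python sketch only illustrates that idea. The function name `pick_gesture`, the dictionary format of the result, and the 0.6 threshold are assumptions made for this example, not part of IDS NXT or IDS lighthouse.

```python
from typing import Dict, Optional


def pick_gesture(probabilities: Dict[str, float],
                 min_confidence: float = 0.6) -> Optional[str]:
    """Return the most likely gesture label, or None if no gesture is confidently seen.

    `probabilities` maps each label ("Rock", "Paper", "Scissor", "Nothing")
    to a value between 0 and 1. The format and the threshold are assumed.
    """
    # Find the label with the highest probability.
    label, score = max(probabilities.items(), key=lambda item: item[1])

    # Treat "Nothing" and low-confidence results as "no gesture".
    if label == "Nothing" or score < min_confidence:
        return None
    return label


if __name__ == "__main__":
    result = {"Rock": 0.91, "Paper": 0.04, "Scissor": 0.03, "Nothing": 0.02}
    print(pick_gesture(result))
```

A threshold like this trades missed gestures against false detections, so the right value would have to be found by trying it out with the real camera.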

Further processing

The recognized gestures could be processed further by a specially created vision app. For this, the captured image of the respective gesture, once evaluated by the AI, must be passed on to the app. The app “knows” the rules of the game, can decide which gesture beats which, and so determines the winner. In the first stage of development, the app will also simulate the opponent. All this is currently in the making and will be implemented in the next step, turning the project into a “Rock, Paper, Scissors”-playing robot.
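The game logic itself is small. As a rough sketch of what such an app has to decide (written in Python for readability, not as the actual vision app, which does not exist yet), the rules and the simulated opponent could look like this. All names here (`BEATS`, `decide_winner`, `play_round`) are hypothetical, and the gesture strings reuse the labels returned by the trained AI.

```python
import random
from typing import Optional, Tuple

GESTURES = ("Rock", "Paper", "Scissor")

# Each key beats the gesture it maps to.
BEATS = {"Rock": "Scissor", "Scissor": "Paper", "Paper": "Rock"}


def decide_winner(player: str, opponent: str) -> str:
    """Return "player", "opponent", or "draw" for one round."""
    if player == opponent:
        return "draw"
    return "player" if BEATS[player] == opponent else "opponent"


def play_round(player_gesture: str,
               rng: Optional[random.Random] = None) -> Tuple[str, str]:
    """Simulate the opponent with a random gesture and evaluate the round."""
    rng = rng or random.Random()
    # The opponent's choice does not depend on the player's gesture.
    opponent_gesture = rng.choice(GESTURES)
    return opponent_gesture, decide_winner(player_gesture, opponent_gesture)


if __name__ == "__main__":
    opponent, winner = play_round("Paper")
    print(f"Opponent chose {opponent}, result: {winner}")
```

Keeping the rules in a lookup table rather than in nested conditions makes it easy to check all combinations at a glance.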

From play to everyday use

For now, the project is more of a gimmick. But what could come of it? A gambling machine? Or maybe even an AI-based sign language translator?

To be continued…