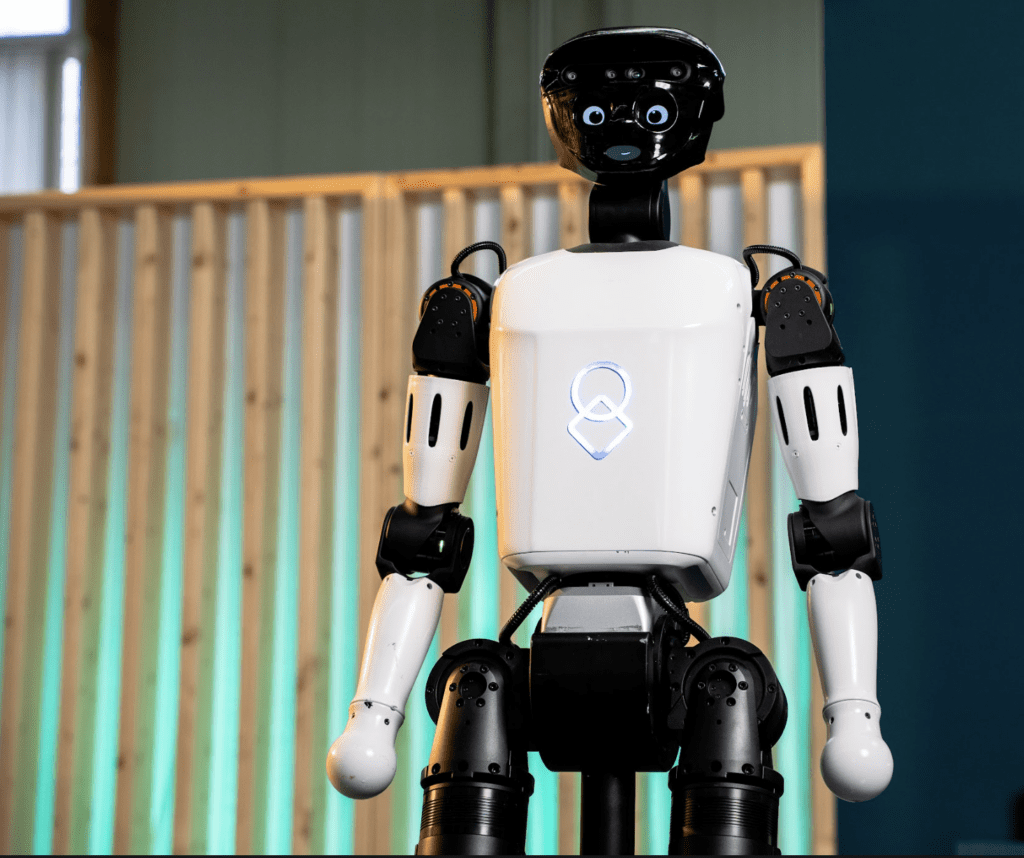

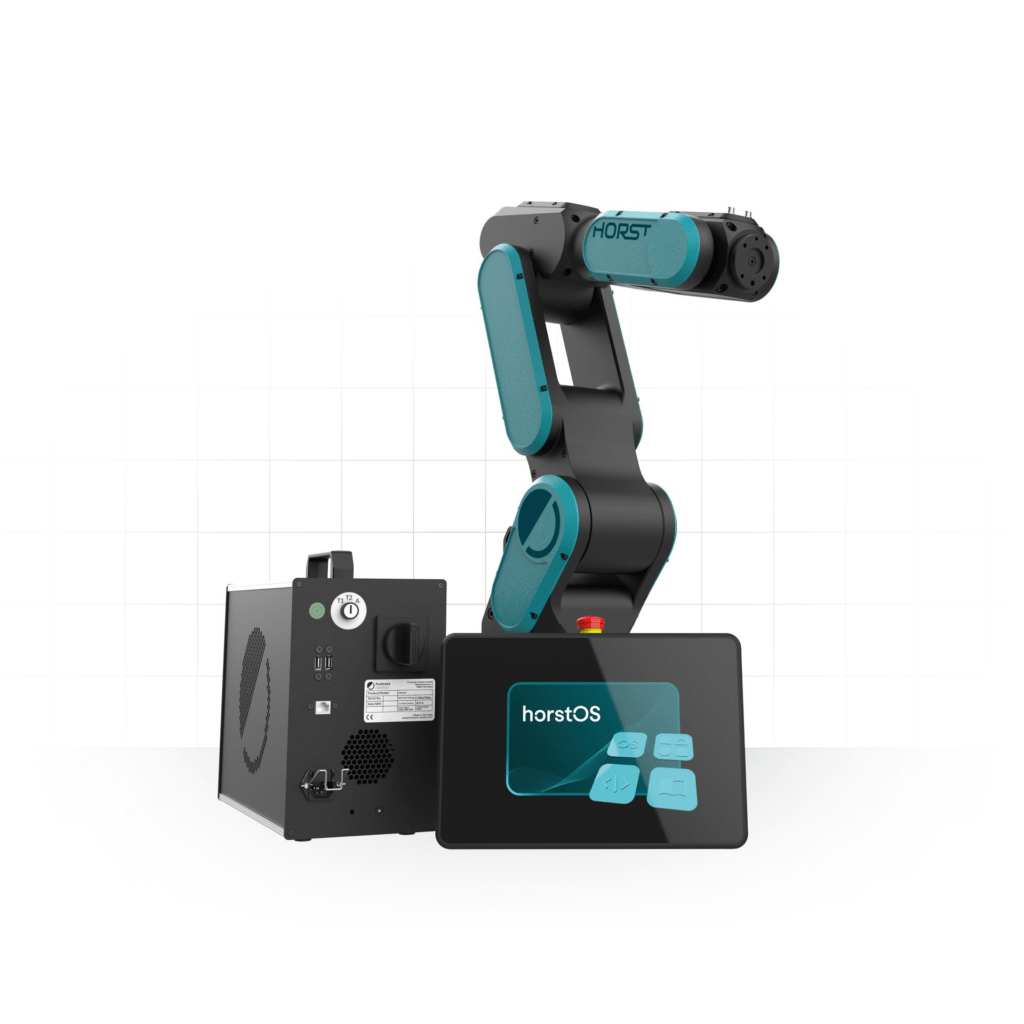

Konstanz, April 10, 2026 – fruitcore robotics is launching two new 6-axis industrial robots, HORST600 G2 and HORST800 G2. The second generation of the HORST platform delivers cycle times up to 40 percent shorter, double the payload, and significantly more workspace. “With HORST600 G2 and HORST800 G2, we are making industrial robotics even more versatile, more powerful, and more reliable. Through standardization, simplicity, and the use of AI, we are also able to offer robot solutions with a unique price-performance ratio,” says Jens Riegger, CEO of fruitcore robotics.

Industrial companies are under pressure: the shortage of skilled workers is worsening, and demands on productivity and flexibility are rising. Automation has become an operational necessity, yet implementation still fails because of complexity and cost. This is exactly where the G2 generation comes in: more flexible integration, more performance, an attractive price.

Unique lifetime costs through a technological lead

Beyond the purchase price, it is above all the high and often unpredictable lifetime costs that make robot automation unattractive today.

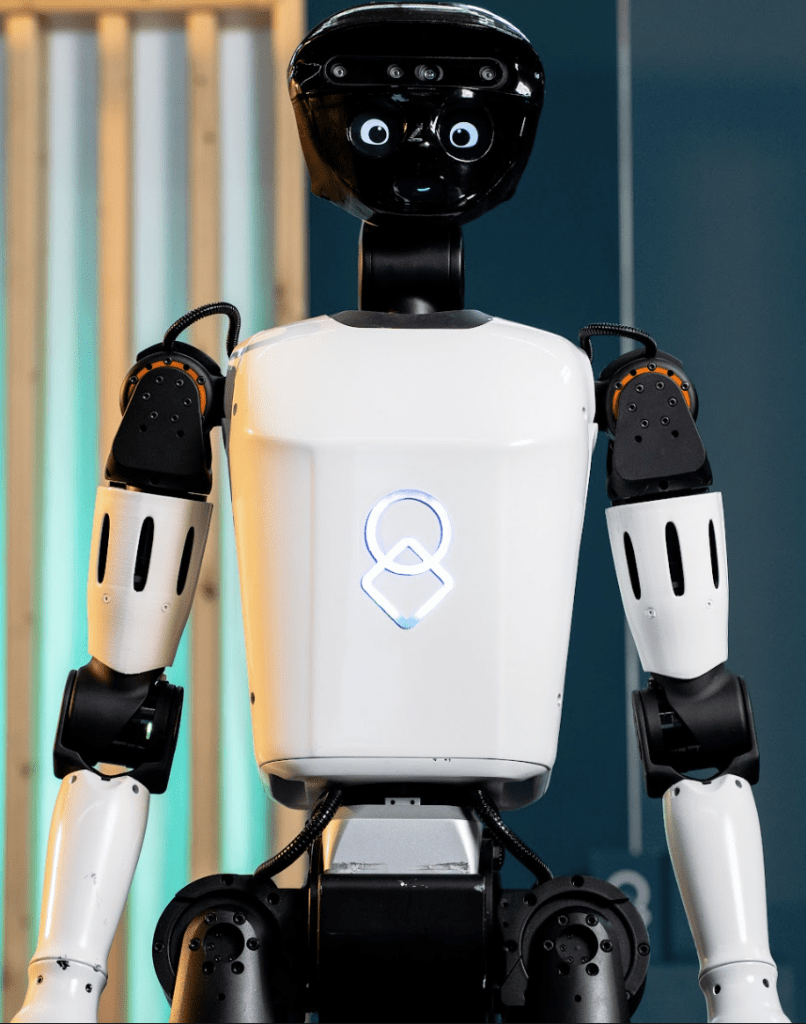

The new G2 robots are based on a revised mechanical platform with an integral design and no sheet-metal covers. This improves repair and maintenance: maintenance-relevant components are easy to reach and simple to replace. fruitcore's patented output-side encoder technology continues to ensure that the robots remain precise over their service life without re-teaching. This is reinforced by new drivers, revised bearings, and new precision gearboxes with up to four times less backlash.

With the launch of the two new models, the entire portfolio from HORST600 G2 to HORST1500 G2 is now designed for a service life of ten years in three-shift operation. The basis for this is the fundamentally different drivetrain of the HORST robots, which lasts up to four times longer than that of comparable products. More than a thousand robots in industrial use and almost a decade of continuous development enable fruitcore to pass this technological advantage on to its customers in the form of a 6-year warranty on the drivetrain. This results in attractive lifetime costs and a high level of investment security.

“The G2 generation is a technological leap, not just an update. We have reworked the mechanics, drives, bearings, and gearboxes from the ground up. The result is robots that meet the highest standards in terms of stiffness and dynamics,” says Patrick Heimburger, Managing Director of fruitcore robotics.

Applications and technical features

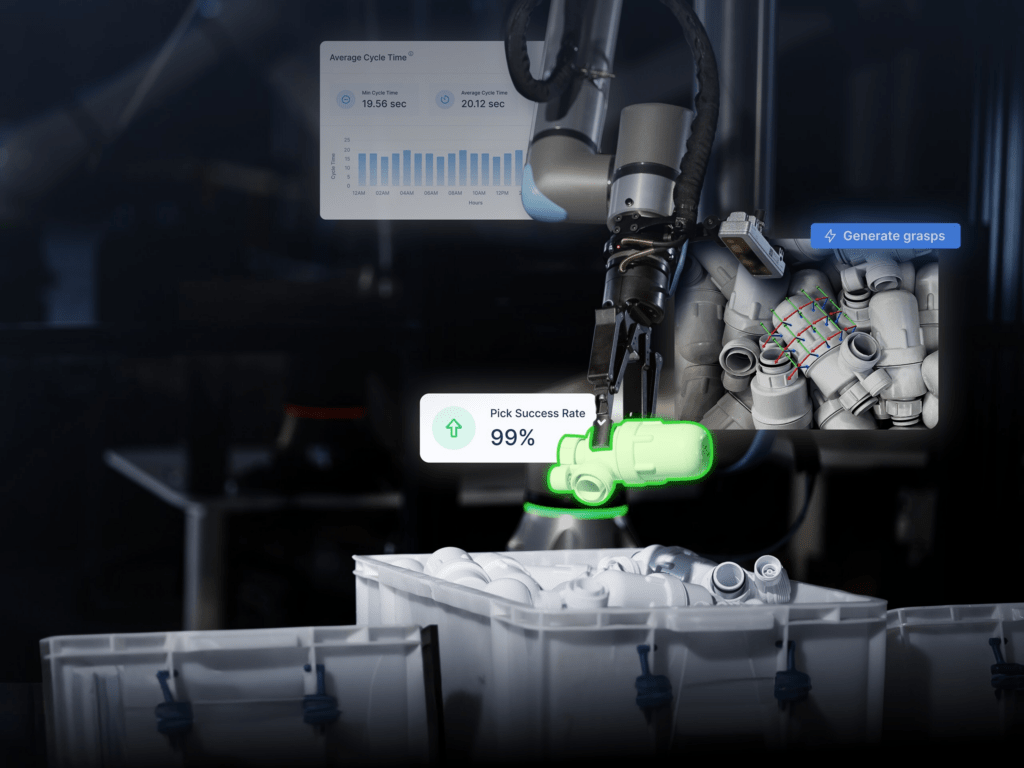

Whether machine tending, quality inspection, or pick and place: with a reach of 610 mm, a payload of 7 kg, and a repeatability of ±0.05 mm, HORST600 G2 covers a broad range of applications. HORST800 G2 goes one step further: a reach of 840 mm and a payload of 6 kg open up applications where previous models reached their limits, for example when reaching deep into machines in very confined spaces. Both models can be mounted on the floor, on the ceiling, or at an angle; HORST600 G2 can also be wall-mounted. A larger working range combined with smaller robot dimensions, together with standard certification to cleanroom class ISO 6 (ISO 5 possible on a project basis), makes both robots even more flexible to deploy. Demanding requirements in sensitive or harsh environments are also met, with protection rating IP65 on axes 5 and 6 and IP54 on the other axes. Like the rest of the fruitcore robotics portfolio, the new robots can be deployed quickly and flexibly thanks to the horstOS operating software, which includes integrated AI functions such as voice control.

Availability and webinar: HORST600 G2 and HORST800 G2 are available to selected customers immediately, with series deliveries starting in Q4 2026. On April 28, 2026 (11:00–12:00), fruitcore robotics will present both models in detail in a webinar. Registration is via www.fruitcore-robotics.com.