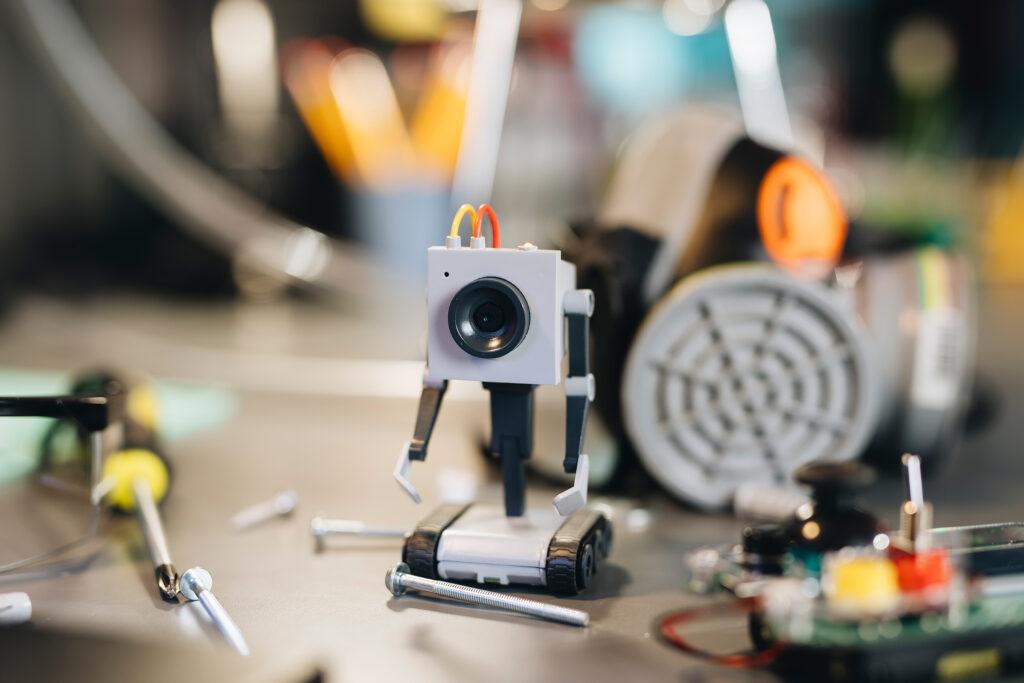

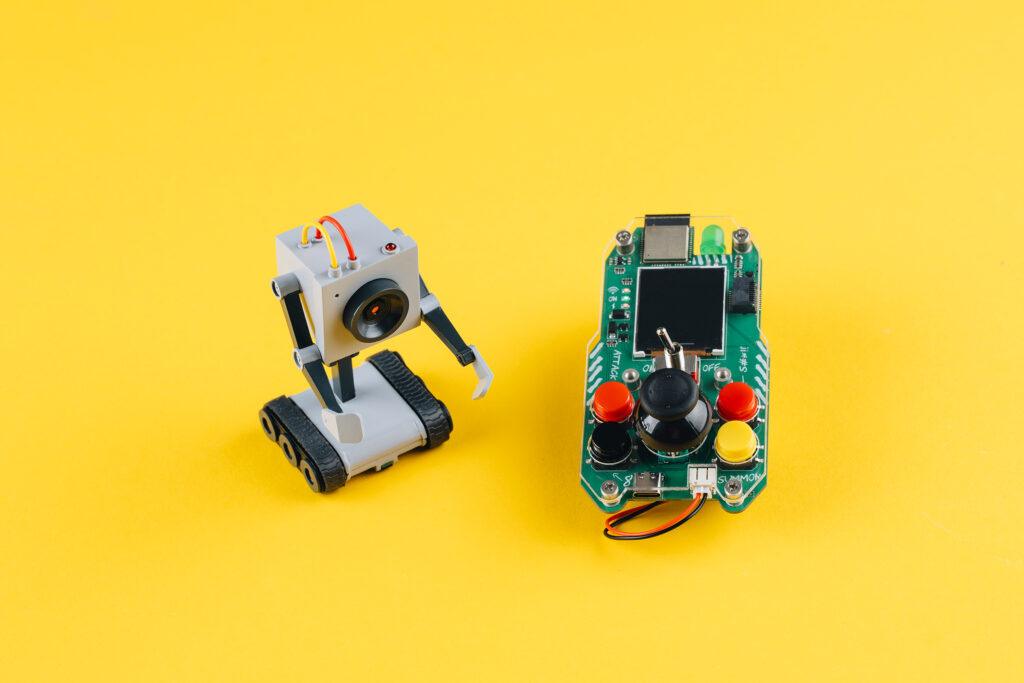

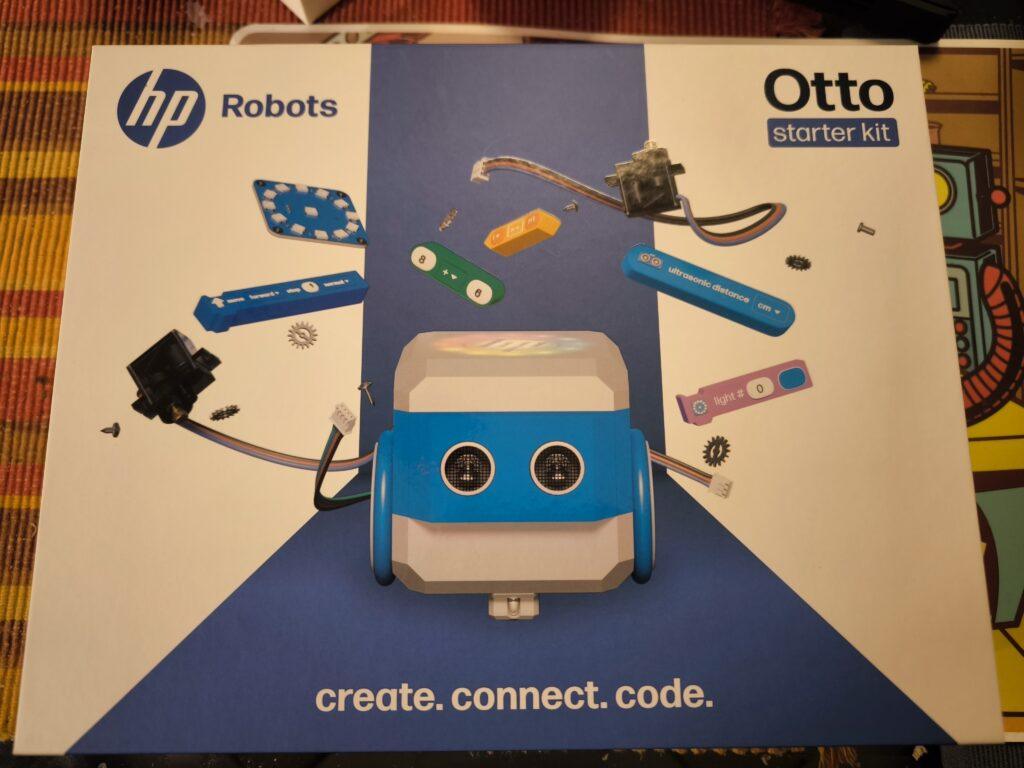

The HP Robots Otto is a versatile, modular robot designed specifically for educational purposes. It offers students and teachers an exciting opportunity to immerse themselves in the world of robotics, 3D printing, electronics and programming. The robot was developed by HP as part of its robotics initiative and is particularly suitable for use in science, technology, engineering and mathematics (STEM) classes.

Key features of Otto:

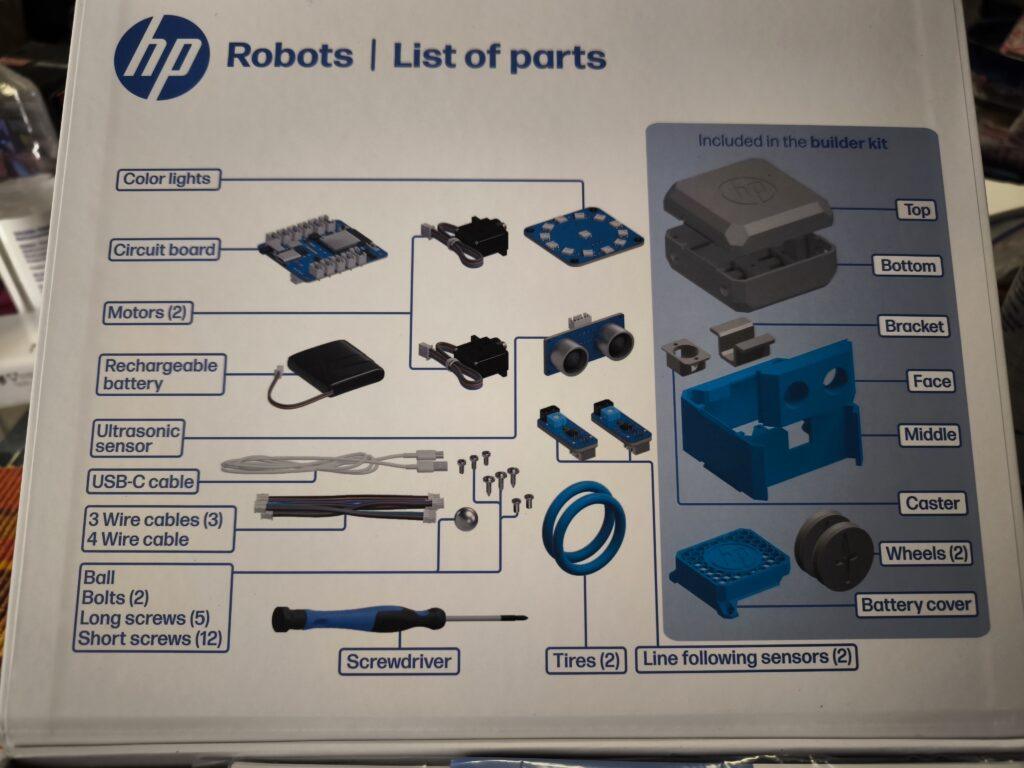

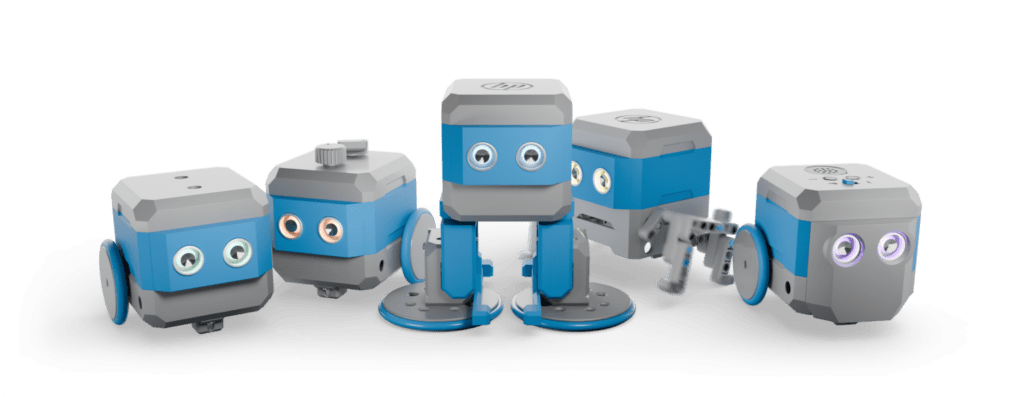

- Modular design: Otto is a modular robot that students can build, program and customize with extensions. This promotes both technical understanding and creativity. Thanks to the modular structure, components such as motors, sensors and LEDs can be added or replaced, which deepens the learning experience for students.

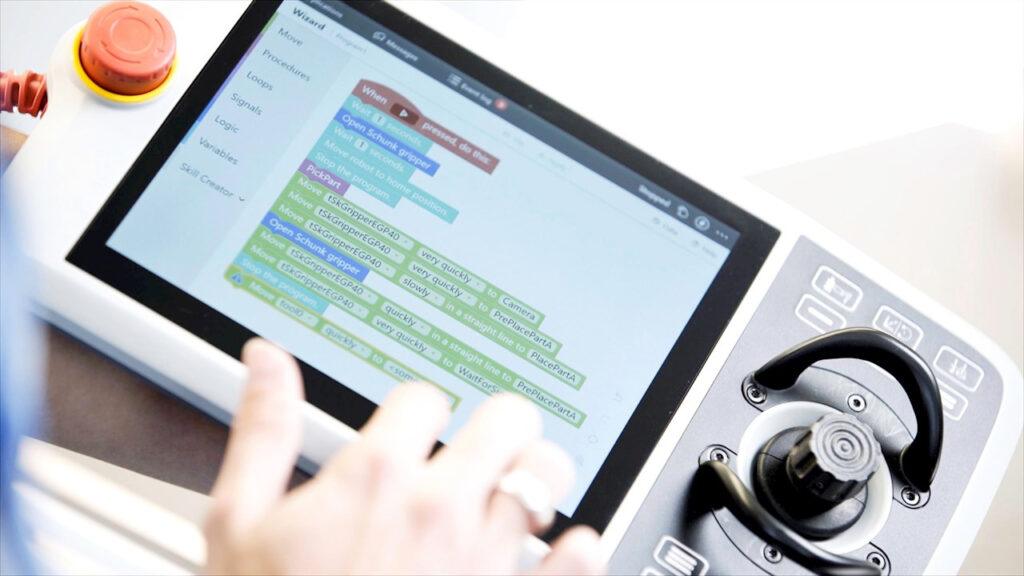

- Programmability: The robot can be programmed in several languages, from block-based programming for beginners to Python and C++ for advanced programmers. This range lets students steadily improve their coding skills and take on increasingly complex tasks.

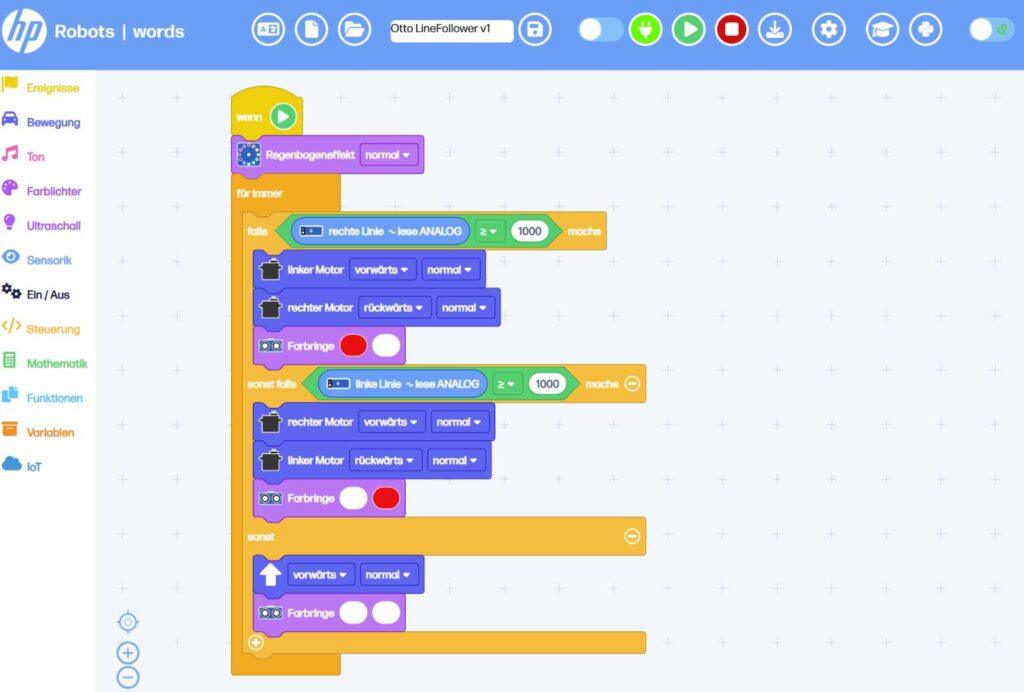

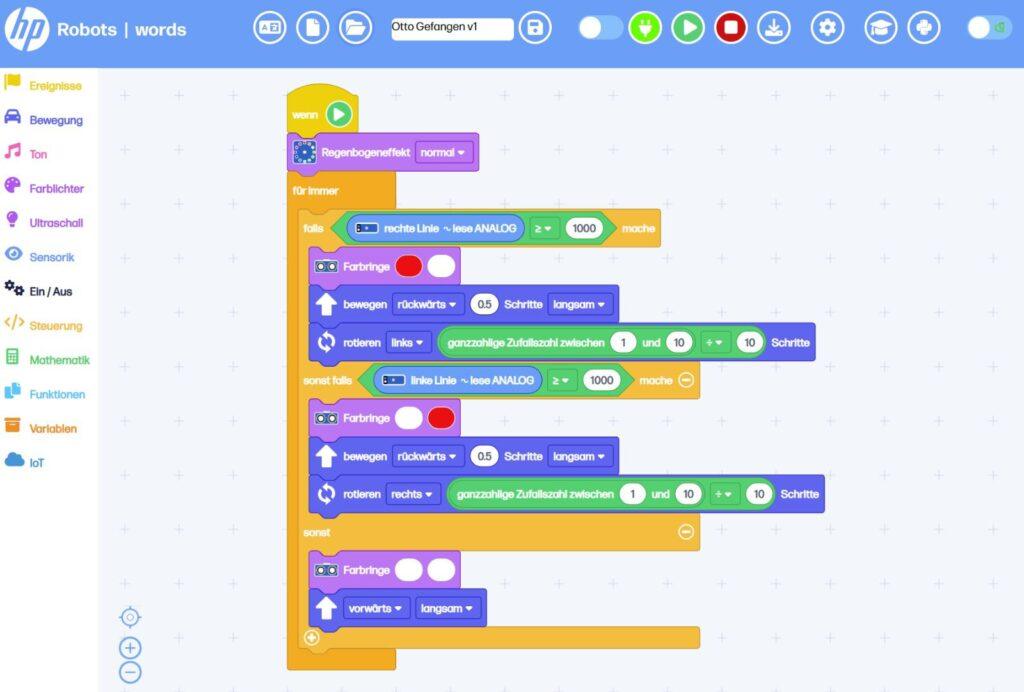

- Sensors and functions: Equipped with ultrasonic sensors for obstacle detection, line-tracking sensors and RGB LEDs, Otto offers numerous interactive possibilities. Students can program complex tasks such as navigating courses or following lines. The sensors let Otto perceive its environment and react accordingly.

- 3D printing and customizability: Students can design Otto’s outer parts themselves and produce them with a 3D printer, which allows the robot to be personalized even further. This creative freedom promotes not only technical understanding but also artistic skills. Students can design their own parts and attach sensors wherever they like.
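To illustrate how the sensor readings described above can turn into behavior, here is a minimal, hardware-independent Python sketch. It does not use Otto’s actual programming API: the function names, the 20 cm threshold and the assumption that both line sensors see the line when the robot is centered are all hypothetical. The distance conversion relies only on the general principle of ultrasonic sensing (echo time multiplied by the speed of sound, halved for the round trip).

```python
# Hypothetical, hardware-independent sketch: no Otto-specific API is used.
SPEED_OF_SOUND_CM_PER_US = 0.0343  # roughly 343 m/s in air at about 20 °C


def echo_to_distance_cm(echo_time_us: float) -> float:
    """Convert an ultrasonic round-trip echo time (microseconds) to centimeters."""
    # The pulse travels to the obstacle and back, so the one-way distance is half.
    return echo_time_us * SPEED_OF_SOUND_CM_PER_US / 2


def avoid_obstacle(distance_cm: float, threshold_cm: float = 20.0) -> str:
    """Turn away when an obstacle is closer than the (assumed) threshold."""
    return "turn" if distance_cm < threshold_cm else "forward"


def follow_line(left_on_line: bool, right_on_line: bool) -> str:
    """Two-sensor line following; assumes both sensors see the line when centered."""
    if left_on_line and right_on_line:
        return "forward"
    if left_on_line:
        return "left"  # robot has drifted right of the line, so steer left
    if right_on_line:
        return "right"  # robot has drifted left of the line, so steer right
    return "search"  # line lost: a real program would start a search pattern


print(avoid_obstacle(echo_to_distance_cm(583)))  # 583 µs is about 10 cm; prints "turn"
```

Keeping the decision rules as small pure functions makes them easy to test on a computer before they are wired to real sensor and motor calls.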

Educational approach:

Otto is ideal for use in schools and is aimed at students aged 8 and up. Younger students should work under supervision, while students aged 14 and older can also use and extend the robot independently. The kit contains all the components needed to build a working robot, including motors, sensors and a rechargeable battery.

Programming environments:

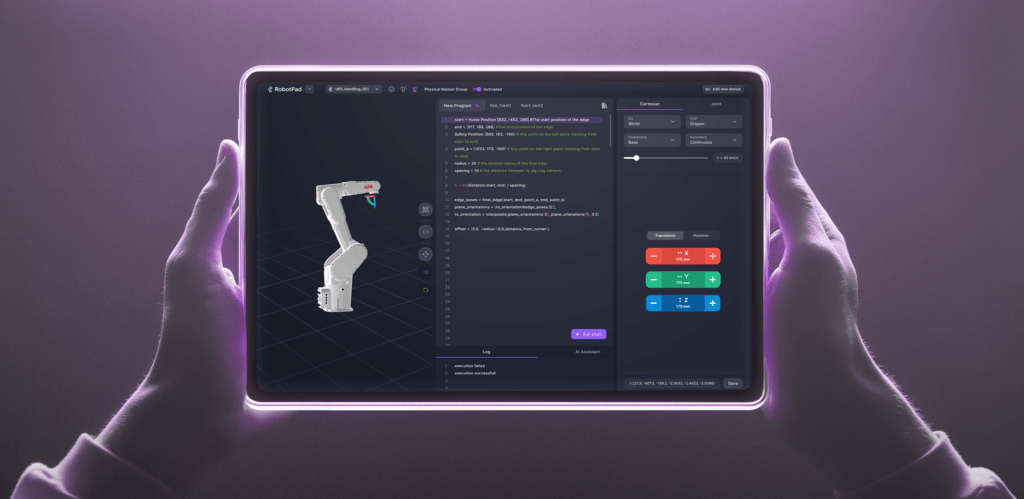

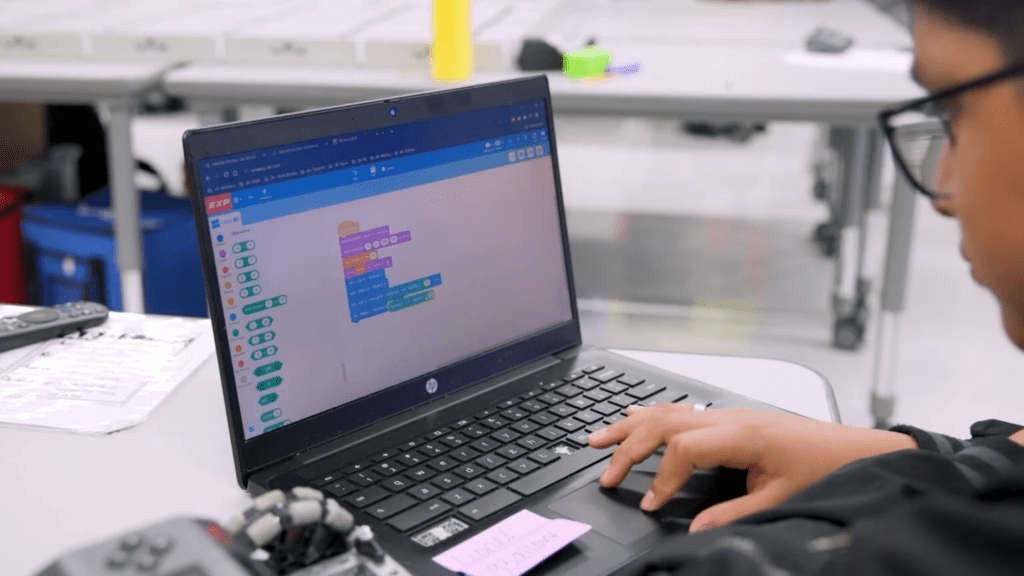

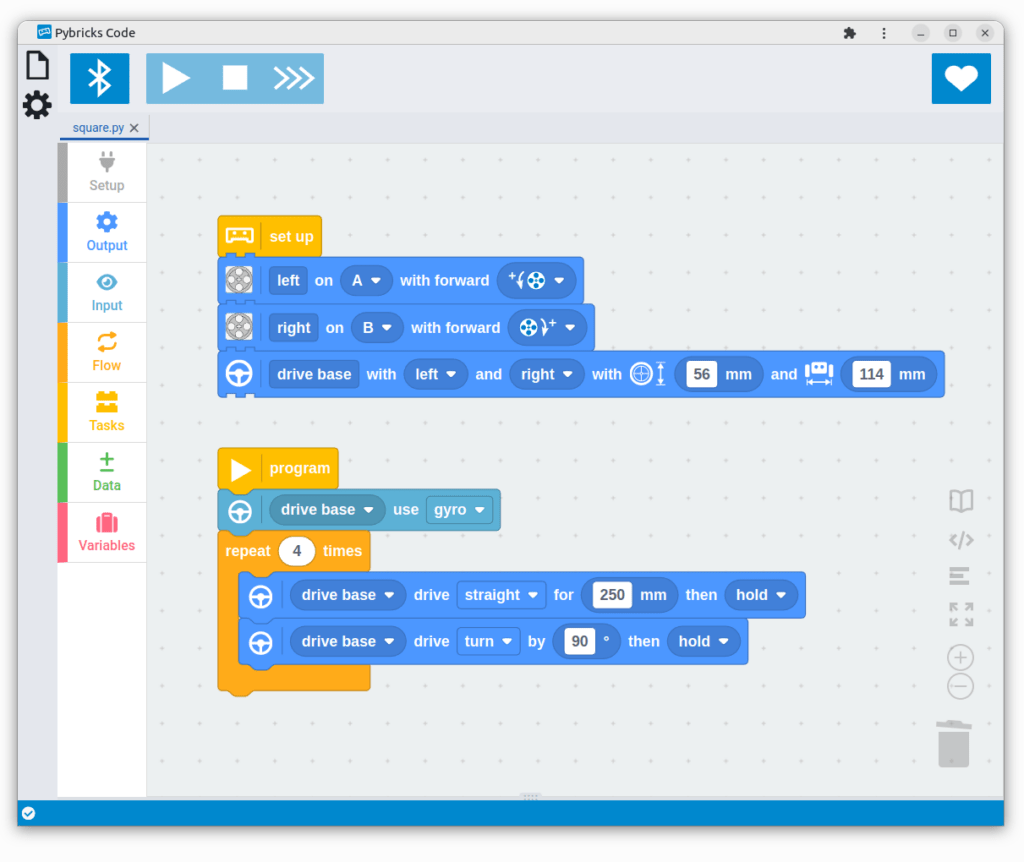

Otto is programmed via a web-based platform that runs in the browser on any operating system. The platform offers different modes:

- Block-based programming: Similar to ScratchJr and ideal for beginners. This visual approach makes it easier to get started with programming and helps students understand basic concepts such as loops and conditions.

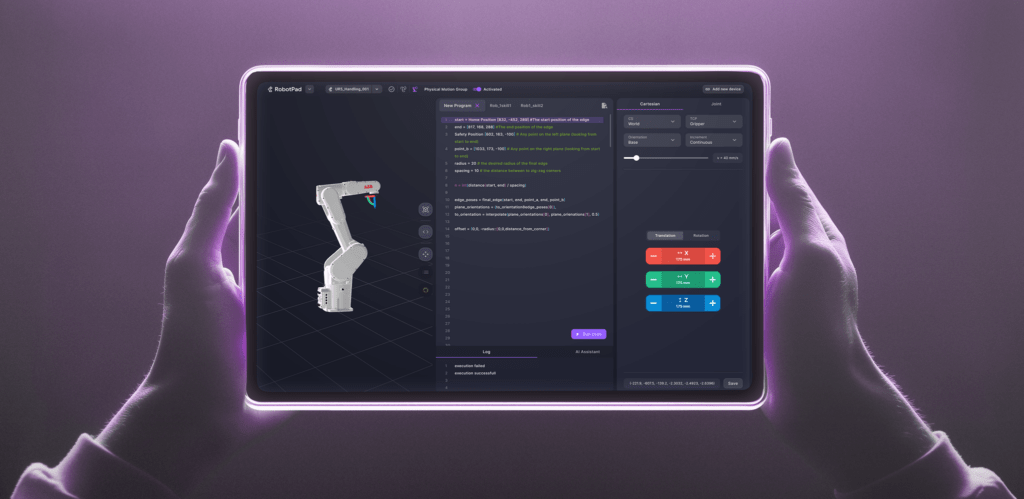

- Python: A Python editor is available for advanced users. Python is a popular language that works well for teaching because it is easy to read and write. Students can use Python to develop more complex algorithms and expand their programming skills.

- C++: For users with deeper programming knowledge, Otto is compatible with the Arduino IDE. C++ offers a high degree of flexibility and gives students direct access to the hardware, opening the door to their own advanced projects.

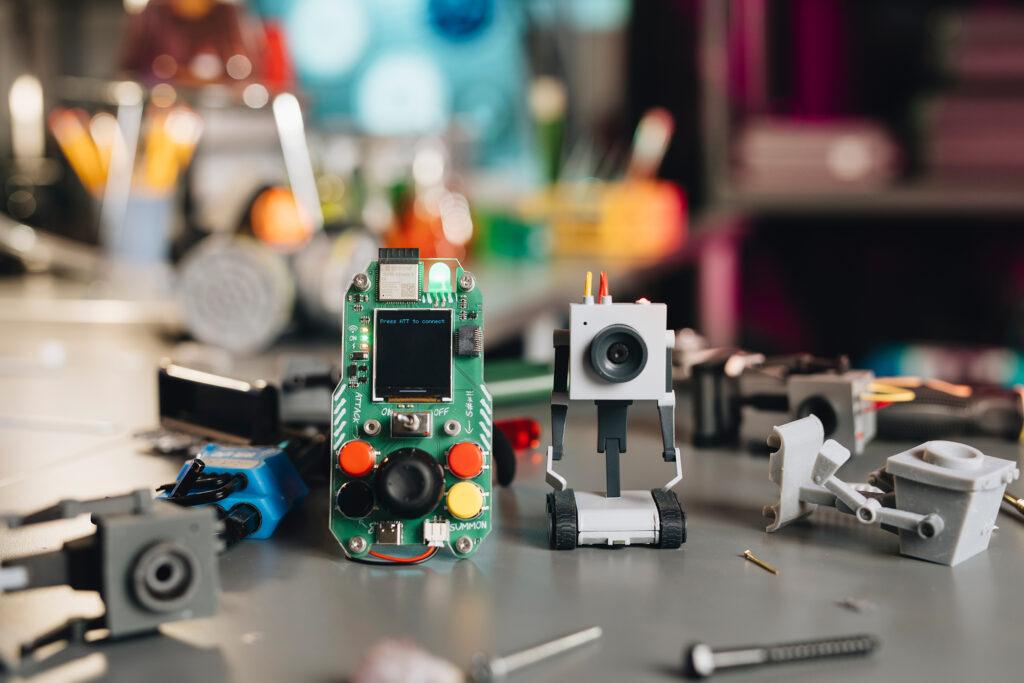

Expansion kits:

In addition to the Starter Kit, several expansion kits are available. All of them require the Starter Kit, since they build on it.

Emote Expansion Kit:

- It includes components such as an LED matrix display, an OLED display and an MP3 player, which let the robot respond visually and acoustically.

- This kit is particularly suitable for creative projects in which Otto acts as an interactive companion.

- The Emote kit allows Otto to show emotions, mirror human interactions and develop different personalities.

Sense Expansion Kit:

- With the Sense Kit, Otto can perceive its surroundings through various sensors.

- Included are sensors for temperature, humidity, light and noise as well as an inclination sensor. These enable a wide range of interactions with the environment.

- The kit is ideal for projects that focus on environmental detection and data analysis.
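The data-analysis side of such projects can be sketched in plain Python: summarize a series of logged readings and flag values above a chosen limit. This sketch does not show how readings are actually obtained from the Sense kit; the temperature log is invented sample data, and `summarize` and `above_limit` are hypothetical helper names.

```python
from statistics import mean


def summarize(readings: list[float]) -> dict[str, float]:
    """Return the minimum, maximum and mean of a series of sensor readings."""
    if not readings:
        raise ValueError("no readings to summarize")
    return {"min": min(readings), "max": max(readings), "mean": mean(readings)}


def above_limit(readings: list[float], limit: float) -> list[int]:
    """Return the positions of readings that exceed a chosen limit."""
    return [i for i, value in enumerate(readings) if value > limit]


# Made-up temperature log in °C, e.g. one reading every 10 minutes.
temperatures = [21.5, 22.0, 22.5, 23.0, 22.0]
print(summarize(temperatures))  # {'min': 21.5, 'max': 23.0, 'mean': 22.2}
print(above_limit(temperatures, 22.2))  # [2, 3]
```

The same two functions work unchanged for humidity, light or noise logs, which makes it easy for students to compare different sensors.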

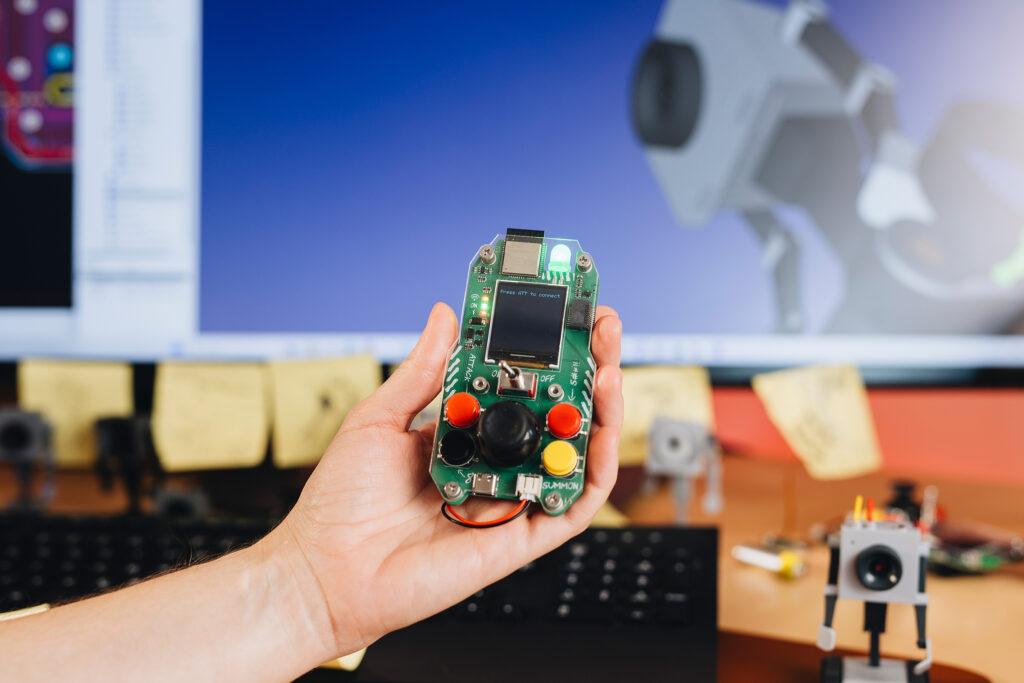

Interact Expansion Kit:

- The Interact kit expands Otto’s interaction capabilities with modules such as push buttons, rotary knobs and accelerometers.

- It enables precise inputs and reactions, as well as measurement of acceleration.

- This kit is great for playful activities and interactive games.
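As an example of what acceleration measurements make possible in a game, the plain-Python sketch below detects shaking and estimates tilt from a single accelerometer reading. It assumes readings in m/s² along three axes, with the z-axis pointing up when the robot lies flat; the real Interact kit may report different units or axis orientations, and all function names are hypothetical. The tilt estimate is only meaningful while the robot is at rest, when the sensor measures gravity alone.

```python
import math

STANDARD_GRAVITY = 9.81  # m/s²; assumes the sensor reports acceleration in m/s²


def magnitude(ax: float, ay: float, az: float) -> float:
    """Length of the acceleration vector."""
    return math.sqrt(ax * ax + ay * ay + az * az)


def is_shaken(ax: float, ay: float, az: float, tolerance: float = 3.0) -> bool:
    """At rest the sensor measures only gravity; a large deviation suggests shaking."""
    return abs(magnitude(ax, ay, az) - STANDARD_GRAVITY) > tolerance


def tilt_degrees(ax: float, ay: float, az: float) -> float:
    """Angle between the sensor's z-axis and the measured gravity vector."""
    total = magnitude(ax, ay, az)
    if total == 0:
        raise ValueError("zero acceleration vector: tilt is undefined")
    # Clamp to [-1, 1] so floating-point rounding cannot break acos.
    cos_angle = max(-1.0, min(1.0, az / total))
    return math.degrees(math.acos(cos_angle))


print(is_shaken(0.0, 0.0, 9.81))  # lying still: False
print(round(tilt_degrees(9.81, 0.0, 0.0)))  # tipped on its side: 90
```

A "shake to start" game, for instance, could poll `is_shaken` in a loop and begin once it returns `True`.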

Invent Expansion Kit:

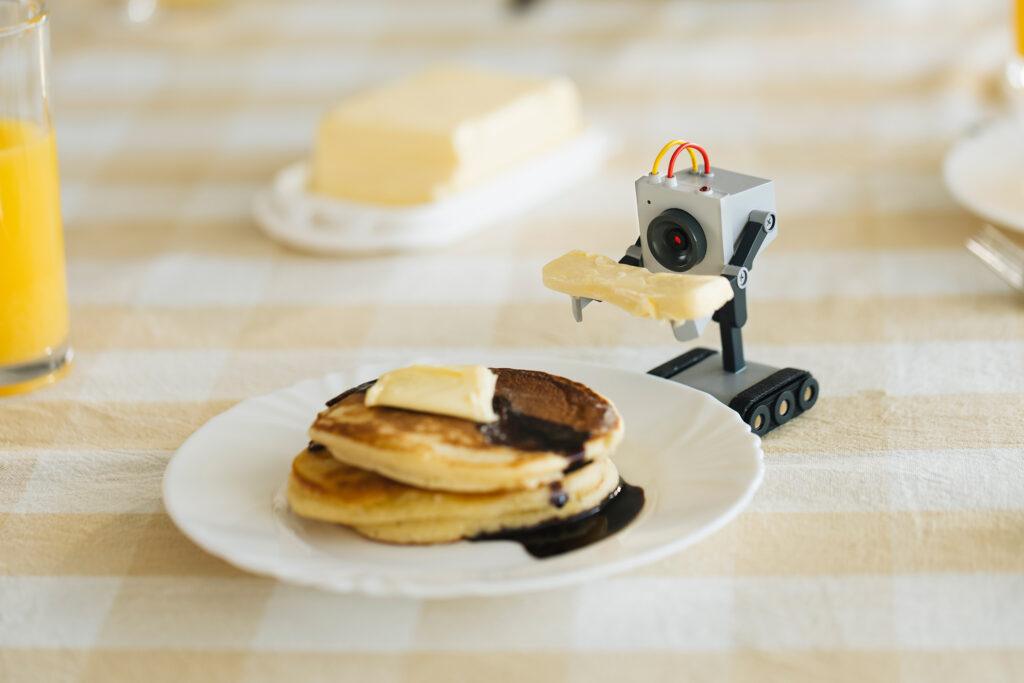

- The Invent kit is specifically designed to encourage users’ creativity. It lets users adapt Otto’s functions and design individually through 3D printing, additional modules and compatible building bricks.

- Users can design and print new accessories to make the robot unique.

- Users can equip Otto with legs and teach it to walk, or fit it with tracks for off-road outdoor use.

Use in the classroom:

Otto comes with extensive resources developed by teachers. These materials help teachers design effective STEM lessons even without prior knowledge. The robot can be used both in the classroom and at home. The teaching materials include:

- Curricula: Structured lesson plans that help teachers plan and execute lessons.

- Project ideas and worksheets: A variety of projects that encourage students to think creatively and expand their skills.

- Tutorials and videos: Additional learning materials to help students better understand complex concepts.

Conclusion:

The HP Robots Otto is an excellent tool for fostering technical understanding and creativity in students. Thanks to its modular design and diverse programming options, it offers hands-on learning in robotics and electronics. Ideal for use in schools, Otto gives teachers a comprehensive platform for accompanying students on an exciting journey into the world of technology. In particular, the 3D-printed parts and expansion kits make Otto versatile enough for students to build their own personal learning robot.