Category archive: Hardware

RBTX robotics marketplace now available in 18 countries

RBTX powered by igus continues its international expansion and makes Low Cost Automation accessible in 10 more countries

Cologne, 23 January 2023: With its Low Cost Automation range, igus set out to lower the barriers to entry into the age of automation. RBTX.com has been part of that range since 2019. The robotics marketplace helps interested parties find the right automation solution for their individual application as easily, quickly and cost-effectively as possible. Until now, RBTX was available in eight countries. igus is now expanding the offering and launching the robotics marketplace in ten additional countries, with three more to follow soon.

To secure their competitiveness, more and more companies are automating their processes. However, the typically high investment costs and a lack of know-how often remain a hurdle, especially for small and medium-sized businesses. This is where RBTX powered by igus comes in: the offering comprises an online marketplace for low-cost robotics components and complete solutions, the RBTXpert remote integration service, and Customer Testing Areas at various locations worldwide, where planned customer applications can be tested live together before purchase. True to the motto: test before invest. In this way, RBTX makes automation accessible to everyone, whether bakery, pharmaceutical laboratory or automotive OEM. “Our RBTX online marketplace grew strongly last year,” says Alexander Mühlens, Head of the Automation Technology and Robotics business unit at igus. “We started in Germany in 2019. Because our offering was very well received and demand is growing steadily, we have already expanded RBTX to Austria, France, Great Britain, the USA, Canada, India and Singapore in recent years. However, we are continuously working to become active in more countries in order to make Low Cost Automation more accessible worldwide. That is why we are now offering RBTX in ten more countries: Poland, Switzerland, Denmark, Italy, Japan, China, Taiwan, South Korea, Vietnam and Thailand. Spain, Brazil and Turkey will follow soon.”

Fast robot integration with remote services is booming

On RBTX.com, interested parties currently have access to more than 300 individual robotics components from 78 manufacturers and more than 150 complete solutions from real-world practice, including guaranteed hardware and software compatibility. The online marketplace also offers a place where humans and robots meet. In the Customer Testing Area, customers can test the feasibility of their planned application together with an RBTXpert, the remote integrator service. The RBTXpert connects from this environment via live video call with those looking to automate and provides individual advice. “In Germany alone, we carry out up to 30 projects per week. We also have Customer Testing Areas on site in many other countries, so the RBTXpert can advise in various languages and time zones,” emphasises Alexander Mühlens. “This gives even more interested parties worldwide direct access to a wide range of low-cost robotics. For example, gluing applications are available from 5,804 euros and quality control applications from 5,476 euros.”

Inspiring North West manufacturers with remote robotics

A virtual reality (VR) robotics showcase has been installed at AMRC North West to help the region’s businesses unlock the immense potential of robotic technology to boost their manufacturing processes.

The inclusion of Extend Robotics’ UR5e RoboKit and SenseKit module is designed to showcase the potential of cutting-edge, accessible robotics technology and to inspire new approaches to manufacturing.

Aparajithan Sivanathan, head of digital technology at the Advanced Manufacturing Research Centre (AMRC) North West, said: “When introducing new technologies, accessibility is crucial.

“The speed and simplicity of installation, coupled with the easy-to-use, intuitive VR controls, means Extend Robotics’ solution has immense potential to upgrade manufacturers’ existing robotics.”

AMRC North West is part of the University of Sheffield and a member of the High Value Manufacturing (HVM) Catapult. Its goal is to help the Lancashire region’s manufacturing community access advanced technologies that will drive improvements in productivity, performance and quality.

This latest piece of kit, purchased by the Samlesbury Enterprise Zone-based research centre, was fulfilled as part of Extend Robotics’ inclusion in Universal Robots’ UR+ ecosystem. This ecosystem, which provides access to more than 340 certified kits, components, grippers, software and safety accessories, seamlessly integrates with Universal Robots’ cobots.

Dr Chang Liu is founder and CEO of Extend Robotics, a company that aims to develop human-robot interface software that lets non-robotics experts teleoperate and programme robotic manipulators remotely for physical tasks.

Dr Liu said: “Our installation with AMRC North West demonstrates just how simple it can be to dramatically upgrade your robotics capabilities. In less than an hour we made it possible for their robotic arm to be remote operated from anywhere in the world. We hope this will inspire other manufacturers to explore how we can extend their capability using our technology.”

The set-up of the VR robotics technology by Extend Robotics took just one hour – and was completed using its unique software, seamlessly integrating with AMRC North West’s existing robotics hardware.

For further details about Extend Robotics, visit: www.extendrobotics.com.

Kosmos Robo-Truck

Kosmos Robo-Truck. Find the latest news on robots, drones, AI, robotic toys and gadgets at robots-blog.com. If you want to see your product featured on our blog, Instagram, Facebook, Twitter or our other sites, contact us.

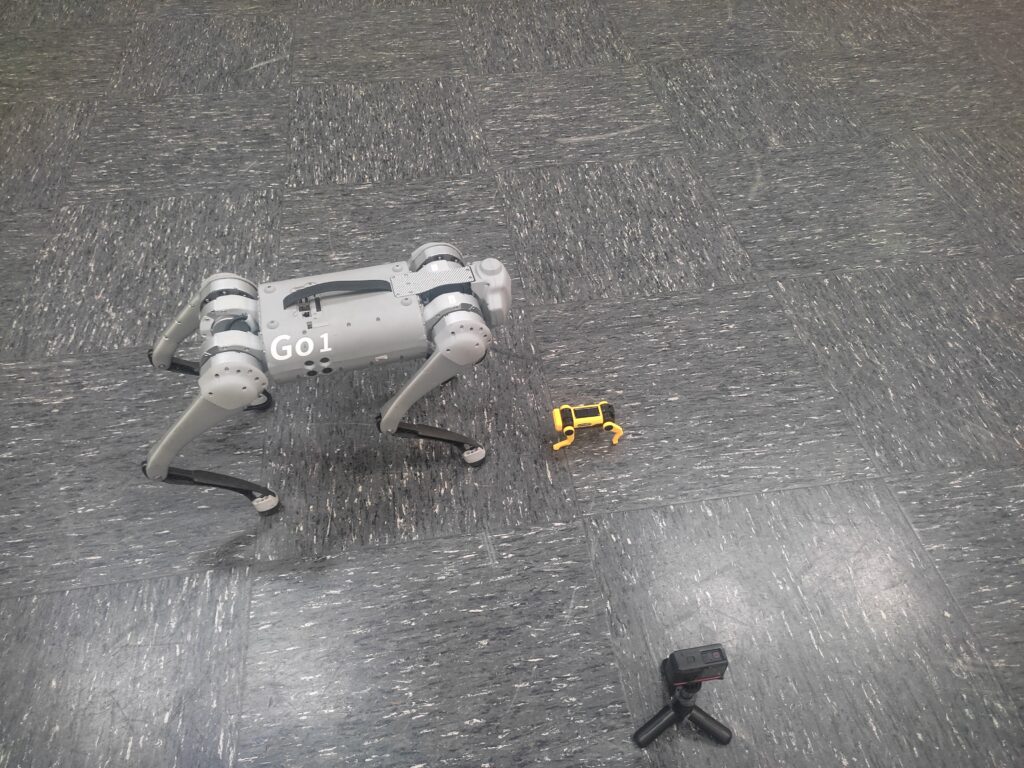

Unitree Quadruped Go 1 meets little robot dog puppy

Unitree Quadruped Go 1 meets little robot dog.

ChatGPT writes about different types of robots

The following text was generated by ChatGPT:

Robots are used in many fields and come in a wide variety of types. Some of the most common types of robot are:

Service robots: Service robots are designed to support people in various tasks and make their lives easier. They are often used in care, for example to help elderly or sick people with personal hygiene or transport. One example is the robot “Paro”, which is used as a therapy robot in retirement homes and is intended to improve residents’ social interaction and well-being through its animal-like appearance and behaviour.

Service robots can also be used in other areas, for example in the hospitality industry to serve drinks and food, or as security guards to prevent break-ins or other disturbances. A well-known example is the robot “Pepper”, which is used in many Japanese banks and shopping centres, where it greets customers and answers questions.

Another application of service robots is the transport of goods or people. One example is the “Starship” robot, which is used in some cities and can deliver groceries or other goods within a defined area.

Entertainment robots: Entertainment robots primarily serve to entertain and are usually less capable than other types of robot. They can take the form of toys for children, such as the robot “Anki Cozmo”, which can solve various tasks and play games with its users.

Entertainment robots can also serve as a substitute for a pet, especially for people who cannot or do not want to keep a real pet for health reasons. A well-known example is the robot “Aibo”, which was developed by Sony and is sold as an electronic dog. Aibo can carry out various commands and behaves much like a real dog.

Entertainment robots can also serve as an attraction and crowd-puller at trade fairs or other events.

Industrial robots: Industrial robots are used in industry to carry out tasks that are too difficult, dangerous or monotonous for humans. They are often used in the automotive industry, in electronics manufacturing or in the chemical industry.

Industrial robots come in various designs, some very large and others very small. They can be equipped with different tools in order to carry out different tasks. Examples of such tools are screwdriving and unscrewing tools, welding equipment or paint spray guns.

Industrial robots are generally very robust and reliable and can work 24 hours a day. They are often used in production lines to carry out recurring tasks. By using industrial robots, companies can increase their productivity and reduce errors.

Software robots: Software robots, also called “bots”, are generally used in the field of software development. They can be used to carry out routine tasks automatically or to make interaction with users easier.

A well-known example of a software robot is the chatbot, which many companies use to answer customer enquiries automatically. Chatbots can be used, for example, in customer service portals or on social media platforms to answer users’ questions or offer help.

Another application of software robots is as a virtual personal assistant, which can be integrated into smartphones or smart home devices, for example. These robots can help users organise their day or control household devices.

Overall, there are many different types of robot that can be used for different tasks. They all have their own strengths and weaknesses and are better suited to certain applications than others. It is important to note that the use of robots

An important aspect when selecting a suitable robot is cost. Service robots and entertainment robots are generally intended for end consumers and can therefore be quite expensive. Industrial robots, by contrast, usually cost more to purchase, but thanks to their high performance and reliability they can save costs in the long run. Software robots are generally cheaper, as they are usually provided as a software solution and need no hardware.

Another factor in choosing a robot is its performance and reliability. Industrial robots are generally very capable and reliable, as they were developed for industrial use and must withstand heavy loads. Service robots and entertainment robots are often less capable, but still able to carry out certain tasks. Software robots are generally very reliable, provided they have been programmed correctly.

Another important criterion in choosing a robot is its flexibility and adaptability. Industrial robots are usually highly specialised and designed for specific tasks, while service robots and entertainment robots are often more flexible and adaptable. Software robots can generally be adapted relatively easily, provided the necessary programming skills are available.

In conclusion, there are many different types of robot that can be used for different tasks. The choice of a suitable robot depends on many factors, such as cost, performance, reliability, flexibility and adaptability. It is important to consider carefully which type of robot is best suited to the planned application.

Market launch: New Ensenso N models for 3D and robot vision

Upgraded Ensenso 3D camera series now available at IDS

Resolution and accuracy have almost doubled, the price has remained the same – those who choose 3D cameras from the Ensenso N series can now benefit from more advanced models. The new stereo vision cameras (N31, N36, N41, N46) can now be purchased from IDS Imaging Development Systems.

The Ensenso N 3D cameras have a compact housing (made of aluminium or plastic composite, depending on the model) with an integrated pattern projector. They are suitable for capturing both static and moving objects. The integrated projector projects a high-contrast texture onto the objects in question. A pattern mask with a random dot pattern supplements missing or only faintly visible surface structures. This allows the cameras to deliver detailed 3D point clouds even in difficult lighting conditions.

With the Ensenso models N31, N36, N41 and N46, IDS is now launching the next generation of the previously available N30, N35, N40 and N45. Visually, the cameras do not differ from their predecessors. They do, however, use a new sensor from Sony, the IMX392. This results in a higher resolution (2.3 MP instead of 1.3 MP). All cameras are pre-calibrated and therefore easy to set up. The Ensenso selector on the IDS website helps to choose the right model.

Whether firmly installed or in mobile use on a robot arm: with Ensenso N, users opt for a 3D camera series that provides reliable 3D information for a wide range of applications. For example, the cameras prove their worth in single-item picking, support remote-controlled industrial robots, are used in logistics and even help to automate high-volume laundries. IDS provides more in-depth insights into the versatile application possibilities with case studies on the company website.

Learn more: https://en.ids-imaging.com/ensenso-3d-camera-n-series.html

Robots-Blog wishes a merry X-Mas and a happy new year!

Robots-Blog wishes a merry X-Mas and a happy new year! Thanks @mybotshopgermany for lending the Unitree Quadruped Go1 robot!

Keep moving

Communication & Safety Challenges Facing Mobile Robot Manufacturers

Mobile robots are everywhere, from warehouses to hospitals and even on the street. Their popularity is easy to understand; they’re cheaper, safer, easier to find, and more productive than actual workers. They’re easy to scale or combine with other machines. As mobile robots collect a lot of real-time data, companies can use mobile robots to start their IIoT journey.

But to work efficiently, mobile robots need safe and reliable communication. This article outlines the main communication and safety challenges facing mobile robot manufacturers and provides an easy way to overcome these challenges to keep mobile robots moving.

What are Mobile Robots?

Before we begin, let’s define what we mean by mobile robots.

Mobile robots transport materials from one location to another and come in two types: automated guided vehicles (AGVs) and autonomous mobile robots (AMRs). AGVs use guiding infrastructure (wires, reflectors, or magnetic strips) to follow predetermined routes. If an object blocks an AGV’s path, the AGV stops and waits until the object is removed.

AMRs are more dynamic. They navigate via maps and use data from cameras, built-in sensors, or laser scanners to detect their surroundings and choose the most efficient route. If an object blocks an AMR’s planned route, it selects another route. As AMRs are not reliant on guiding infrastructure, they’re quicker to install and can adapt to logistical changes.
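The difference in behaviour can be illustrated with a minimal sketch. It assumes a small occupancy grid (1 marks a blocked cell) and uses a plain breadth-first search as a stand-in for an AMR's route planner; the function names `agv_step` and `shortest_path` are hypothetical and not taken from any vendor software.

```python
from collections import deque


def shortest_path(grid, start, goal):
    """Breadth-first search on a 4-connected grid; returns a list of cells or None."""
    rows, cols = len(grid), len(grid[0])
    previous = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:  # walk back to the start
                path.append(cell)
                cell = previous[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and nxt not in previous:
                previous[nxt] = cell
                queue.append(nxt)
    return None


def agv_step(fixed_route, index, grid):
    """AGV behaviour: follow the fixed route, or wait if the next cell is blocked."""
    if index + 1 >= len(fixed_route):
        return index, "arrived"
    r, c = fixed_route[index + 1]
    if grid[r][c] == 1:
        return index, "waiting"
    return index + 1, "moving"


# An obstacle sits on the direct route from (0, 0) to (0, 2).
grid = [
    [0, 1, 0],
    [0, 0, 0],
]
fixed_route = [(0, 0), (0, 1), (0, 2)]

agv_state = agv_step(fixed_route, 0, grid)      # the AGV stays put and waits
amr_route = shortest_path(grid, (0, 0), (0, 2))  # the AMR plans a detour
```

In this sketch the AGV remains at index 0 with the state "waiting", while the AMR finds a detour through the second row.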

What are the Communication and Safety Challenges Facing Mobile Robot Manufacturers?

1. Establish a Wireless Connection

The first challenge for mobile robot manufacturers is to select the most suitable wireless technology. The usual advice is to establish the requirements, evaluate the standards, and choose the best match. Unfortunately, this isn’t always possible for mobile robot manufacturers as often they don’t know where the machine will be located or the exact details of the target application.

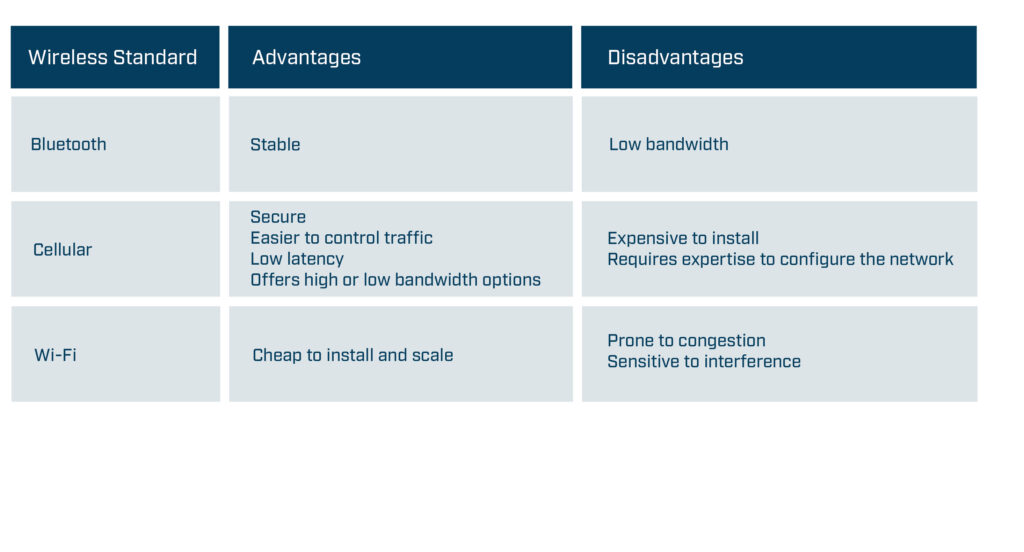

Sometimes a Bluetooth connection will be ideal as it offers a stable non-congested connection, while other applications will require a high-speed, secure cellular connection. What would be useful for mobile robot manufacturers is to have a networking technology that’s easy to change to meet specific requirements.

Wireless standards: high-level advantages and disadvantages

The second challenge is to ensure that the installation works as planned. Before installing a wireless solution, complete a predictive site survey based on facility drawings to ensure the mobile robots have sufficient signal coverage throughout the location. The site survey should identify the optimal location for the access points (APs), the correct antenna type, the optimal antenna angle, and how to mitigate interference. After the installation, use wireless sniffer tools to check the design and adjust the APs or antennas as required.
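As a rough illustration of what a predictive coverage check involves, the sketch below estimates the received signal along a robot route using the free-space path loss formula. This is a strong simplification: a real site survey also accounts for walls, racking, antenna patterns and interference. The transmit power of 20 dBm and the threshold of -67 dBm are assumed values chosen for the example, and the function names are hypothetical.

```python
import math


def free_space_path_loss_db(distance_m, frequency_mhz):
    """Free-space path loss in dB (distance in metres, frequency in MHz)."""
    return 20 * math.log10(distance_m) + 20 * math.log10(frequency_mhz) - 27.55


def best_signal_dbm(point, access_points, frequency_mhz, tx_power_dbm=20.0):
    """Strongest predicted signal at a point from any access point."""
    best = -math.inf
    for ap in access_points:
        distance = max(math.dist(point, ap), 1.0)  # clamp to avoid log10(0)
        loss = free_space_path_loss_db(distance, frequency_mhz)
        best = max(best, tx_power_dbm - loss)
    return best


def uncovered_points(waypoints, access_points, frequency_mhz, threshold_dbm=-67.0):
    """Waypoints where the predicted signal falls below the assumed threshold."""
    return [
        p for p in waypoints
        if best_signal_dbm(p, access_points, frequency_mhz) < threshold_dbm
    ]


# Coordinates in metres; one access point at the origin, 2.4 GHz band.
route = [(10, 0), (1000, 0)]
gaps = uncovered_points(route, [(0, 0)], 2400)
```

Here the far waypoint is reported as a coverage gap; adding a second access point near it would close the gap in this model.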

2. Connecting Mobile Robots to Industrial Networks

Mobile robots need to communicate with controllers at the relevant site even though the mobile robots and controllers are often using different industrial protocols. For example, an AGV might use CANopen while the controller might use PROFINET. Furthermore, mobile robot manufacturers may want to use the same AGV model on a different site where the controller uses another industrial network, such as EtherCAT.

Mobile robot manufacturers also need to ensure that their mobile robots have sufficient capacity to process the required amount of data. The required amount of data will vary depending on the size and type of installation. Large installations may use more data as the routing algorithms need to cover a larger area, more vehicles, and more potential routes. Navigation systems such as vision navigation process images and therefore require more processing power than installations using other navigation systems such as reflectors. As a result, mobile robot manufacturers must solve the following challenges:

- They need a networking technology that supports all major fieldbus and industrial Ethernet networks.

- It needs to be easy to change the networking technology to enable the mobile robot to communicate on the same industrial network as the controller without changing the hardware design.

- They need to ensure that the networking technology has sufficient capacity and functionality to process the required data.
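One way to picture the requirement of changing the network without changing the design is an interface that hides the protocol from the robot application. The sketch below is purely illustrative: the classes `NetworkModule`, `ProfinetModule`, `EtherCatModule` and `MobileRobot` are hypothetical, and the `publish` methods are placeholders that do not implement the real protocols.

```python
from abc import ABC, abstractmethod


class NetworkModule(ABC):
    """Hypothetical interface between the robot application and the site network."""

    protocol = "unknown"

    @abstractmethod
    def publish(self, status: dict) -> str:
        """Forward a status update to the site controller."""


class ProfinetModule(NetworkModule):
    protocol = "PROFINET"

    def publish(self, status: dict) -> str:
        # Placeholder: a real module would map the status to process data.
        return f"{self.protocol}: {sorted(status.items())}"


class EtherCatModule(NetworkModule):
    protocol = "EtherCAT"

    def publish(self, status: dict) -> str:
        # Placeholder: a real module would map the status to process data.
        return f"{self.protocol}: {sorted(status.items())}"


class MobileRobot:
    """Robot logic depends only on the interface, not on a specific network."""

    def __init__(self, network: NetworkModule):
        self.network = network

    def report(self, battery_percent: int, position: tuple) -> str:
        return self.network.publish(
            {"battery": battery_percent, "position": position}
        )


# The same robot code runs on two sites with different controllers.
site_a = MobileRobot(ProfinetModule()).report(80, (1, 2))
site_b = MobileRobot(EtherCatModule()).report(80, (1, 2))
```

The design choice is that only the module passed to `MobileRobot` differs between sites, which mirrors the idea of swapping the networking technology while leaving the rest of the machine untouched.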

3. Creating a Safe System

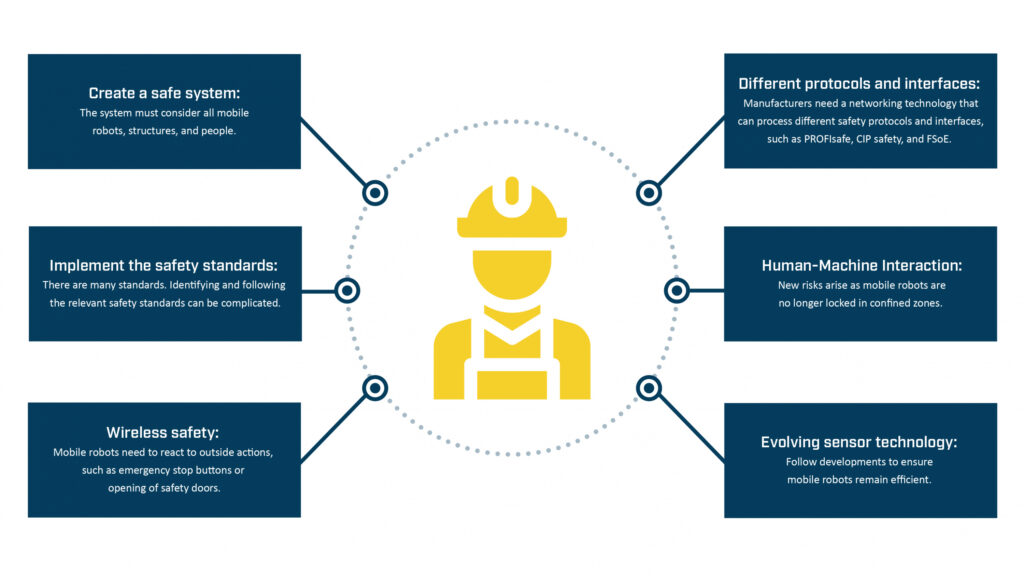

Creating a system where mobile robots can safely transport material is a critical but challenging task. Mobile robot manufacturers need to create a system that considers all the diverse types of mobile robots, structures, and people in the environment. They need to ensure that the mobile robots react to outside actions, such as someone opening a safety door or pushing an emergency stop button, and that the networking solution can process different safety protocols and interfaces. They need to consider that AMRs move freely and manage the risk of collisions accordingly. The technology used in sensors is constantly evolving, and mobile robot manufacturers need to follow the developments to ensure their products remain as efficient as possible.

Overview of Safety Challenges for Mobile Robot Manufacturers

Safety Standards

The safety standards provide guidelines on implementing safety-related components, preparing the environment, and maintaining machines or equipment.

While compliance with the different safety standards (ISO, DIN, IEC, ANSI, etc.) is mostly voluntary, machine builders in the European Union are legally required to follow the safety standards in the machinery directives. Machinery directive 2006/42/EC is always applicable for mobile robot manufacturers, and in some applications, directive 2014/30/EU might also be relevant as it regulates the electromagnetic compatibility of equipment. Machinery directive 2006/42/EC describes the requirements for the design and construction of safe machines introduced into the European market. Manufacturers can only affix a CE label and deliver the machine to their customers if they can prove in the declaration of conformity that they have fulfilled the directive’s requirements.

Although the other safety standards are not mandatory, manufacturers should still follow them as they help to fulfill the requirements in machinery directive 2006/42/EC. For example, manufacturers can follow the guidance in ISO 12100 to reduce identified risks to an acceptable residual risk. They can use ISO 13849 or IEC 62061 to find the required safety level for each risk and ensure that the corresponding safety-related function meets the defined requirements. Mobile robot manufacturers decide how they achieve a certain safety level. For example, they can decrease the speed of the mobile robot to lower the risk of collisions and severity of injuries to an acceptable level. Or they can ensure that mobile robots only operate in separated zones where human access is prohibited (defined as confined zones in ISO 3691-4).
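To make the step of finding the required safety level more concrete, the sketch below encodes the risk graph of ISO 13849-1 as it is commonly presented: severity of injury (S1 slight, S2 serious), frequency or duration of exposure (F1 seldom, F2 frequent) and possibility of avoiding the hazard (P1 possible, P2 scarcely possible) lead to a required performance level from "a" to "e". It is an illustration only and no substitute for the standard or a documented risk assessment; the function name is hypothetical.

```python
# Risk graph as commonly presented for ISO 13849-1 (illustrative only).
RISK_GRAPH = {
    ("S1", "F1", "P1"): "a",
    ("S1", "F1", "P2"): "b",
    ("S1", "F2", "P1"): "b",
    ("S1", "F2", "P2"): "c",
    ("S2", "F1", "P1"): "c",
    ("S2", "F1", "P2"): "d",
    ("S2", "F2", "P1"): "d",
    ("S2", "F2", "P2"): "e",
}


def required_performance_level(severity, frequency, avoidance):
    """Look up the required performance level (PLr) for one identified risk."""
    try:
        return RISK_GRAPH[(severity, frequency, avoidance)]
    except KeyError:
        raise ValueError(
            f"unknown parameters: {severity}, {frequency}, {avoidance}"
        ) from None


# Assumed example: a collision risk with frequent exposure and possible avoidance.
plr_full_speed = required_performance_level("S2", "F2", "P1")
# If the risk assessment concludes that a reduced speed limits injuries
# to slight ones, the severity parameter changes and the PLr drops.
plr_reduced_speed = required_performance_level("S1", "F2", "P1")
```

Whether a given speed reduction actually justifies the lower severity class is a decision for the risk assessment, not something the lookup can determine.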

Identifying the correct standards and implementing the requirements is the best way mobile robot manufacturers can create a safe system. But as this summary suggests, it’s a complicated and time-consuming process.

4. Ensuring a Reliable CAN Communication

A reliable and easy-to-implement standard since the 1980s, communication based on CAN technology is still growing in popularity, mainly due to its use in various booming industries, such as e-mobility and battery energy storage systems (BESS). CAN is simple, energy-efficient and cost-efficient. All the devices on the network can access all the information, and it’s an open standard, meaning that users can adapt and extend the messages to meet their needs.

For mobile robot manufacturers, establishing a CAN connection is becoming even more vital as it enables them to monitor the lithium-ion batteries increasingly used in mobile robot drive systems, either in retrofit systems or in new installations. Mobile robot manufacturers need to do the following:

1. Establish a reliable connection to the CAN or CANopen communication standards to enable them to check their devices, such as monitoring the battery’s status and performance.

2. Protect systems from electromagnetic interference (EMI), as EMI can destroy a system’s electronics. The risk of EMI is significant in retrofits as adding new components, such as batteries next to the communication cable, results in the introduction of high-frequency electromagnetic disturbances.
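Battery monitoring over CAN ultimately means decoding small binary payloads. The sketch below decodes one 8-byte frame using Python's standard `struct` module. The payload layout is entirely hypothetical; real battery management systems define their own message layout or CANopen object mapping, so the fields, scaling factors and warning limits here are assumptions made for illustration.

```python
import struct

# Hypothetical 8-byte payload layout (little-endian), for illustration only:
#   uint16 voltage in 0.01 V, int16 current in 0.1 A,
#   uint8 state of charge in %, int8 temperature in deg C, 2 padding bytes.
BATTERY_FRAME = struct.Struct("<HhBbxx")


def decode_battery_frame(payload: bytes) -> dict:
    """Decode one battery status payload into engineering units."""
    if len(payload) != BATTERY_FRAME.size:
        raise ValueError(f"expected {BATTERY_FRAME.size} bytes, got {len(payload)}")
    raw_voltage, raw_current, soc, temperature = BATTERY_FRAME.unpack(payload)
    return {
        "voltage_v": raw_voltage * 0.01,
        "current_a": raw_current * 0.1,
        "state_of_charge_percent": soc,
        "temperature_c": temperature,
    }


def battery_warnings(status: dict, min_soc=20, max_temperature_c=55) -> list:
    """Return warnings for values outside the assumed limits."""
    warnings = []
    if status["state_of_charge_percent"] < min_soc:
        warnings.append("low state of charge")
    if status["temperature_c"] > max_temperature_c:
        warnings.append("high temperature")
    return warnings


# Build a sample payload instead of reading from a real bus.
sample = BATTERY_FRAME.pack(4810, -125, 76, 31)
status = decode_battery_frame(sample)
```

In a real system the payload would come from a CAN interface rather than from `pack`, and the length check helps reject frames that were truncated or corrupted on the way.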

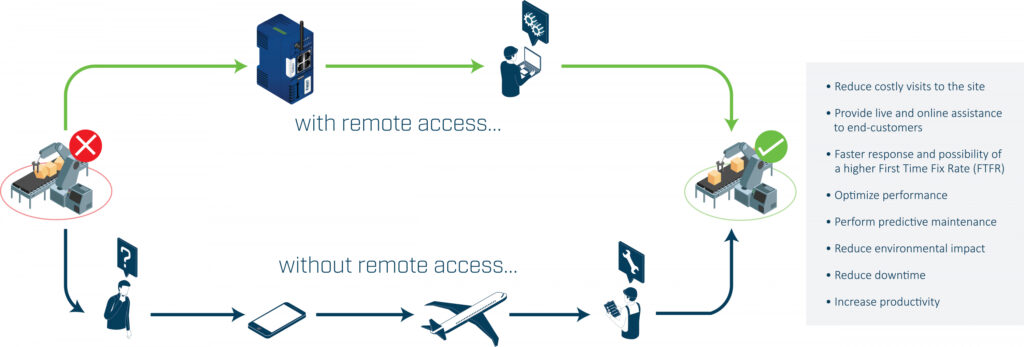

5. Accessing Mobile Robots Remotely

The ability to remotely access a machine’s control system can enable mobile robot vendors or engineers to troubleshoot and resolve most problems without traveling to the site.

Benefits of Remote Access

The challenge is to create a remote access solution that balances the needs of the IT department with the needs of the engineer or vendor.

The IT department wants to ensure that the network remains secure, reliable, and retains integrity. As a result, the remote access solution should include the following security measures:

- Use outbound connections rather than inbound connections to keep the impact on the firewall to a minimum.

- Separate the relevant traffic from the rest of the network.

- Encrypt and protect all traffic to ensure its confidentiality and integrity.

- Ensure that vendors work in line with or are certified to relevant security standards such as ISO 27001.

- Ensure that suppliers complete regular security audits.

The engineer or vendor wants an easy-to-use and dependable system. It should be easy for users to connect to the mobile robots and access the required information. If the installation might change, it should be easy to scale the number of robots as required. If the mobile robots are in a different country from the vendors or engineers, the networking infrastructure must have sufficient coverage and redundancy to guarantee availability worldwide.

Conclusion

As we’ve seen, mobile robot manufacturers must solve many communication and safety challenges. They must establish a wireless connection, send data over different networks, ensure safety, connect to CAN systems, and securely access the robots remotely. And to make it more complicated, each installation must be re-assessed and adapted to meet the on-site requirements.

Best practice to implement mobile robot communication

Mobile robot manufacturers are rarely communication or safety experts. Consequently, they can find it time-consuming and expensive to try to develop the required communication technology in-house. Enlisting purpose-built third-party communication solutions not only solves the communication challenges at hand, it also provides other benefits.

Modern communication solutions have a modular design enabling mobile robot manufacturers to remove one networking product designed for one standard or protocol and replace it with a product designed for a different standard or protocol without impacting any other part of the machine. For example, Bluetooth may be the most suitable wireless standard in one installation, while Wi-Fi may provide better coverage in another installation. Similarly, one site may use the PROFINET and PROFIsafe protocols, while another may use different industrial and safety protocols. In both scenarios, mobile robot manufacturers can use communication products to change the networking technology to meet the local requirements without making any changes to the hardware design.

Authors:

Mark Crossley, Daniel Heinzler, Fredrik Brynolf, Thomas Carlsson

HMS Networks

HMS Networks is an industrial communication expert based in Sweden, providing several solutions for AGV communication. Read more on www.hms-networks.com/agv

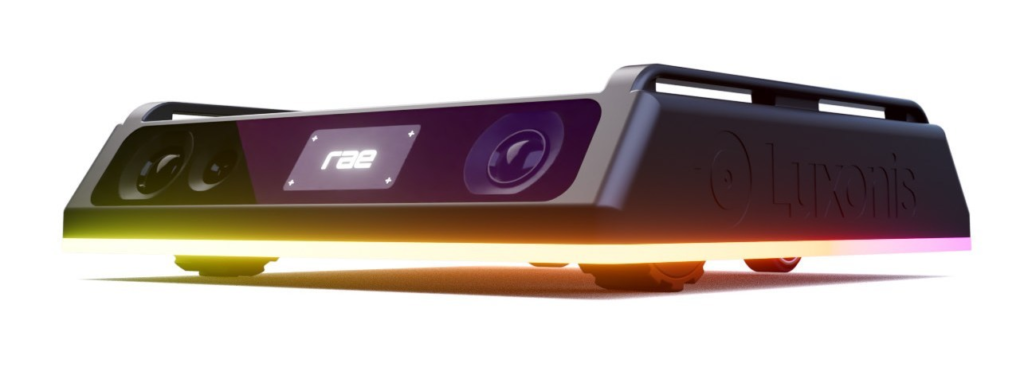

Robotic Vision Platform Luxonis Announces its First Open Source Personal Robot, rae

LITTLETON, Colo., 2022 /PRNewswire/ — Luxonis, a Colorado-based robotic vision platform, is thrilled to introduce rae, its first fully-formed and high-powered personal robot. Backed by a Kickstarter campaign to help support its development, rae sets itself apart by offering a multitude of features right out of the box, along with a unique degree of experimental programming potential that far exceeds other consumer robots on the market. The most recent of a long line of Luxonis innovations, rae is designed to make robotics accessible and simple for users of any experience level.

“rae is representative of our foremost goal at Luxonis: to make robotics accessible and simple for anyone, not just the tenured engineer with years of programming experience,” said Brandon Gilles, CEO of Luxonis. “A longstanding truth about robotics is that the barrier to entry sometimes feels impossibly high, but it doesn’t have to be that way. By creating rae, we want to help demonstrate the kinds of positive impacts robotics can bring to all people’s lives, whether it’s as simple as helping you find your keys, talking with your friend who uses American Sign Language, or playing with your kids.”

At its core, rae is a world-class robot, and includes AI, computer vision and machine learning all on-device. Building upon the technology of the brand’s award-winning OAK cameras, rae offers stereo depth, object tracking, motion estimation, 9-axis IMU data, neural inference, corner detection, motion zooming, and is fully compatible with the DepthAI API. With next-generation simultaneous localization and mapping (SLAM), rae can map out and navigate through unknown environments, and is preconfigured with ROS 2 for access to its robust collection of software and applications.

Featuring a full suite of standard applications, rae offers games like follow me and hide and seek, and useful tools like barcode/QR code scanning, license plate reading, fall detection, time-lapse recording, emotion recognition, object finding, sign language interpretation, and a security alert mode. All applications are controllable through rae’s mobile app, which can be used from anywhere in the world. Users can also link with Luxonis’ cloud platform, RobotHub, to manage customizations, download and install user-created applications, and collaborate with Luxonis’ user community.

Running crowdfunding campaigns and developing trailblazing products that roboticists love is in Luxonis’ DNA, as its track record of two successful previous campaigns shows. Luxonis’ first Kickstarter for the OpenCV AI Kit in 2021 raised $1.3 million with 6,564 backers, and the second for the OAK-D Lite raised $1.02 million with 7,988 backers. With the support of robot hobbyists and brand loyalists, as well as new target backers such as educators, students, and parents, rae is en route to leave an impact as a revolutionary personal robot that isn’t limited to niche demographics.

Pricing for rae starts at $299 and international shipping is available.

Interested backers can learn more about the campaign here.

For more information about Luxonis, visit https://www.luxonis.com/

About Luxonis:

The mission at Luxonis is robotic vision, made simple. They are working to improve the engineering efficiency of the world through their industry-leading and award-winning camera systems, which embed AI, CV, and machine learning into a high-performing, compact package. Luxonis offers full-stack solutions spanning hardware, firmware, software, and a growing cloud-based management platform, and prioritizes customer success above all else through the continued development of their DepthAI ecosystem.